How do you choose which tasks to automate?

📋 Summary

I think we developers have an important mission. But the importance of this mission forces us to be rigorous and to question ourselves.

🦸 The developer’s mission

A few weeks ago, a colleague and I were working on a presentation about IT professions. Specifically, we were introducing the roles of developers and architects to aspiring young engineers.

As I contemplated the core mission of a developer, it struck me:

Our job is to automate things.

Indeed, we automate real-world business processes and various computer tasks.

Truth be told, software’s primary aim is to save clients time and, consequently, money. The time a service saves its clients largely determines the price they’re willing to pay. Reducing the risk of errors also holds real value.

An excellent example is Rebase by Pieter Levels, which helps entrepreneurs move to Portugal. The service automates an administrative process, bundling expertise and saving clients time. 🤝

We noted how strong this inclination towards automation is among developers: everything should be automated. In fact, my recent design principles even include:

Automate all the things!

It’s an extreme application of the DRY (Don’t Repeat Yourself) principle, but applied to task execution. Some developers even have a principle of never doing the same thing twice. Personally, I question it after the second time (somewhat akin to Write Everything Twice).

🛠️ Automating everything – a flawed idea?

Yet this week, reading Olivier’s tweet, I remembered that I actually hold a more moderate view on automation.

Olivier stresses the need to choose what to automate and what level of quality to deliver. The effort differs greatly between a quick-and-dirty solution and a fully polished development. In a fast-paced, competitive world, finding a balanced approach seems prudent.

I come back to his approach later in the article, from a more extreme angle. But first, let’s focus on a topic close to my heart: which tasks should really be automated?

🕵️ Identifying tasks for automation

In a world with finite resources, automating everything isn’t feasible. Hence, choices must be made. Here, I present a tool in the form of an assessment grid to prioritize task automation.

📊 Evaluation grids

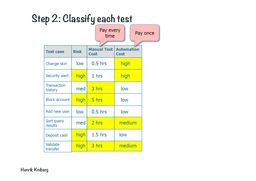

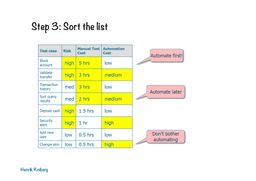

🧪 Test classification

Nearly 10 years ago, I was struck by the test classification Henrik Kniberg gave in his presentation “What is an agile tester?”. It prioritizes tests for automation based on three criteria: the risk of the functionality failing, the automation effort, and the cost of manual testing. It does not, however, take test frequency into account.

A feature with high risk, lengthy manual testing, and low automation effort should take precedence over a feature with low risk, minimal manual execution effort, and significant automation effort.

This is, in effect, a calculation of the ROI (Return on Investment) of test automation.
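To make this ranking concrete, here is a minimal Python sketch. The scoring formula (risk multiplied by manual cost, divided by automation effort), the 1-to-3 risk scale, and the sample features are all my own assumptions for illustration; they are not taken from Henrik Kniberg’s presentation.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    """A feature whose test could be automated (hypothetical structure)."""
    name: str
    risk: int                # assumed scale: 1 (low) to 3 (high)
    manual_minutes: float    # cost of one manual test run
    automation_hours: float  # one-off effort to automate the test


def automation_score(c: Candidate) -> float:
    """Assumed formula: risk-weighted manual cost per hour of automation effort.

    Higher means the test should be automated sooner.
    """
    return c.risk * c.manual_minutes / c.automation_hours


# Made-up sample data, only here to exercise the ranking.
candidates = [
    Candidate("checkout payment", risk=3, manual_minutes=30, automation_hours=4),
    Candidate("profile avatar upload", risk=1, manual_minutes=5, automation_hours=16),
    Candidate("login", risk=3, manual_minutes=5, automation_hours=2),
]

ranked = sorted(candidates, key=automation_score, reverse=True)
for c in ranked:
    print(f"{c.name}: {automation_score(c):.2f}")
```

With these made-up numbers, the high-risk, slow-to-test, cheap-to-automate feature comes first and the low-risk, expensive-to-automate one comes last, which matches the rule of thumb above.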

Although the author touches on risk, he does not develop it fully.

💥 Risk matrix

Automation isn’t just about speed and productivity. It’s also about making operations safer and reducing the potential for error.

Hence, I can’t help but link this to the risk matrix, which includes:

- Probability

- Impact/Severity

This grid is used in risk management, for example in security assessments or when evaluating the risks facing a hosting platform or a data center.

I also apply it to assess tasks for automation. What’s the probability of an error in a manual task? What’s the impact of that error?

Consequently, some tasks that run very rarely but whose errors would have a critical impact are still worth automating. Take infrastructure creation: what if you lose your entire production infrastructure? How long can you afford to spend rebuilding it without errors? Even if this operation is only performed once or twice, you’ll want to automate it because of its criticality.
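The risk matrix can be sketched in a few lines of Python. The three-level scale, the multiplication of the two axes, and the thresholds are assumptions I picked for the example; real risk matrices often use four or five levels and their own cut-offs.

```python
# Assumed three-level scale for both axes of the matrix.
LEVELS = {"low": 1, "medium": 2, "high": 3}


def risk_score(probability: str, impact: str) -> int:
    """Position in the matrix, expressed as probability level x impact level."""
    return LEVELS[probability] * LEVELS[impact]


def risk_class(score: int) -> str:
    """Assumed thresholds splitting the matrix into three zones."""
    if score >= 6:
        return "critical"
    if score >= 3:
        return "significant"
    return "minor"


# Hypothetical manual tasks: (name, probability of a manual error, impact of that error).
tasks = [
    ("rebuild production infrastructure by hand", "high", "high"),
    ("rotate a log file by hand", "low", "low"),
    ("apply a database migration by hand", "low", "high"),
]

for name, probability, impact in tasks:
    score = risk_score(probability, impact)
    print(f"{name}: score {score} ({risk_class(score)})")
```

Note that execution frequency appears nowhere in this score. That is exactly why a task performed once or twice can still land in the critical zone.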

🧑‍🏫 Practical implementation

🧑‍💼 Work automation prioritization

Combining these two evaluation grids gives a robust tool for prioritizing which tasks to automate (not just tests). It’s what we practice daily at work. For each candidate task, we ask:

- Manual execution cost?

- Automation effort?

- Execution frequency?

- Risk of manual error?

- Expected execution time?

Answering these five questions allows us to make informed decisions on task automation.

🧑‍💻 Personal automation prioritization

Personally, I’ve taken to jotting down everything I do, a continuous documentation of sorts[1]. I maintain a kind of notebook for each task, which lets me replay it manually with less thinking and a lower (though never zero) risk of error. For repetitive tasks, I ask myself:

- Time spent on manual execution?

- Task execution frequency?

- Time needed for task automation?

- Observed manual error rate for this task?

Using these four questions, I decide whether to automate a task.
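These four questions boil down to a break-even calculation, sketched below in Python. It assumes that an automated run costs close to nothing and that each manual error costs a fixed rework time; `rework_minutes` is an extra parameter I introduce for the example, as are all the sample figures.

```python
def breakeven_runs(manual_minutes: float, automation_minutes: float,
                   error_rate: float = 0.0, rework_minutes: float = 0.0) -> float:
    """Number of runs after which automating costs less than doing it by hand.

    Assumption: one manual run costs its execution time plus the expected
    rework time (error_rate x rework_minutes); an automated run costs ~0.
    """
    cost_per_manual_run = manual_minutes + error_rate * rework_minutes
    if cost_per_manual_run <= 0:
        raise ValueError("a manual run must cost some time")
    return automation_minutes / cost_per_manual_run


def worth_automating(manual_minutes: float, automation_minutes: float,
                     runs_per_year: float, horizon_years: float = 1.0,
                     error_rate: float = 0.0, rework_minutes: float = 0.0) -> bool:
    """True if the task runs often enough to pay back within the horizon."""
    expected_runs = runs_per_year * horizon_years
    needed_runs = breakeven_runs(manual_minutes, automation_minutes,
                                 error_rate, rework_minutes)
    return expected_runs >= needed_runs


# Hypothetical weekly task: 10 minutes by hand, 4 hours to automate,
# 1 run in 10 goes wrong and then costs 30 minutes of rework.
print(breakeven_runs(10, 240, error_rate=0.1, rework_minutes=30))
print(worth_automating(10, 240, runs_per_year=52,
                       error_rate=0.1, rework_minutes=30))

# Hypothetical yearly task: 20 minutes by hand, 8 hours to automate.
print(worth_automating(20, 480, runs_per_year=1))
```

This only covers the time side of the decision. A rare task with a critical error impact can fail this test and still deserve automation, for the reasons given in the risk matrix section.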

⚡ Taking it further with eXtreme Lean

Finally, let’s revisit Olivier’s thoughts:

“We can’t automate everything.”

“We don’t have time to do everything perfectly or even neatly.”

About 2 years ago at BreizhCamp, I attended a fascinating talk by François-Guillaume Ribreau and Sébastien Brousse, explaining how they pushed the principles of lean startup and eXtreme Programming to the extreme with what they call eXtreme Lean. It relies heavily on “Fake it till you make it”.

Their approach is pragmatic – no need to code features perfectly until client traction is proven. However, they do not compromise on test automation. It’s the eXtreme Programming aspect, providing agility with a safety net.

⚖️ Conclusion

In summary: yes, our mission is to automate everything, but we don’t have infinite resources to do so. We have to prioritize, which means calculating the ROI of each automation. That is what helps us find the right balance.

So let’s automate tasks that genuinely save us time within our capacity to produce, and leave ourselves the means to conquer the world[2].

[1] I elaborate more on this in my post about the Zettelkasten method - what I learned about the journey of note-taking.

[2] Or at least our market. 😜